I very recently quit a teaching program that I had been part of for more than four years, and to which I had developed a personal connection over that time. Besides being lucrative, it was a program that was for a good part shaped by me and my colleagues, and it was fun and fulfilling to teach. Why quit, you might ask.

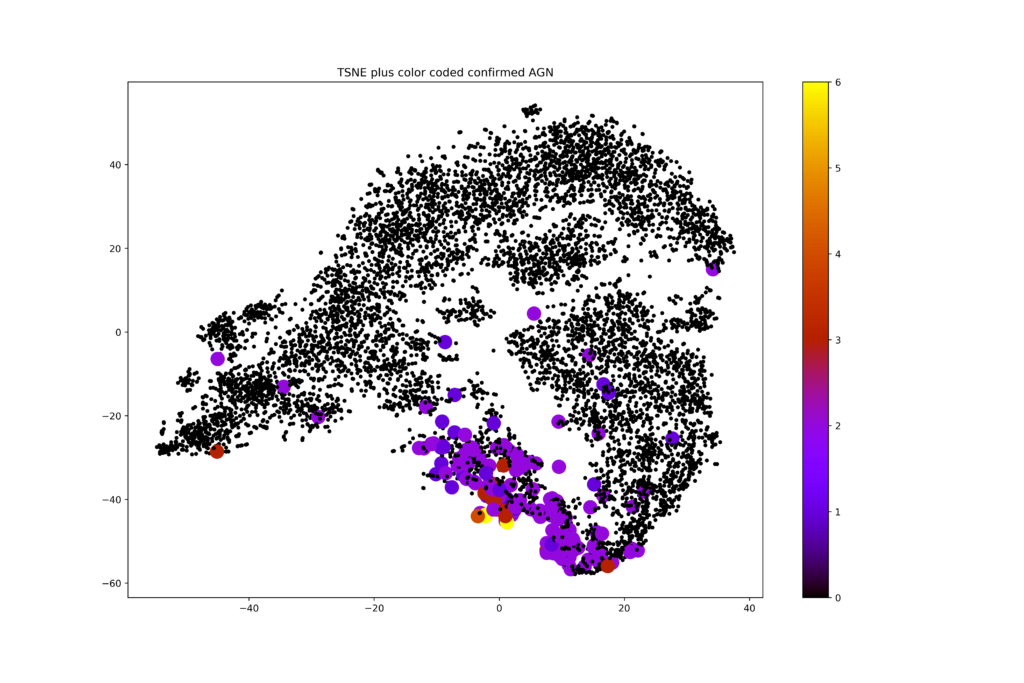

The program, consisting of modules for data scientists, analytics translators and managers/executives, was a collaboration between a consulting company, Ortec, and the Amsterdam Business School, a department of the University of Amsterdam that organizes a lot of executive education. The data scientist program was ruthlessly killed about a year ago, because of fierce competition from online platforms. Face-to-face education is more effective and in my opinion worthwhile, but if you can’t offer the right program for the right price, then it isn’t weird to decide not to offer it. That so many people think you can learn such skills through watching a few videos and typing in two lines of code in an automatically checking code interpreter continues to amaze me, and I bet we will see the devastating effects of this, now still junior, generation of YouTube data scientists in due time. But I digress.

The module for analytics translators is still alive, and I was still teaching it these past few months. It was fulfilling as always: I spent two half days in hotel lecture rooms with a group of enthusiastic participants. The program this time was already rather different from what it had been before, and with the feedback of the current cohort, leadership decided to do another round of modifications.

Do not misunderstand me: continuously updating your educational offerings is what a good teacher does. Incorporating feedback from participants (or students) is crucial, as only they can properly judge whether your efforts help them reach their learning goals. One remark the program director made in the process made me scratch my head, though:

“I am just trying to design a program that I can sell.”

Sure, selling your program is important, as otherwise there is no program. I get that. And for consulting companies (this program director is not with the consulting party in the collaboration) this may be the most viable, or even the only viable, way of running a business. I think, though, that people come to trusted educational institutions like universities for a different reason. A university does not design a curriculum for sales. It sets learning goals (which may well be informed by the job market!) and then designs an educational pathway to best reach those goals. What people need to learn is determined by where they want to end up, not by which sexy set of courses happens to be easily marketed.

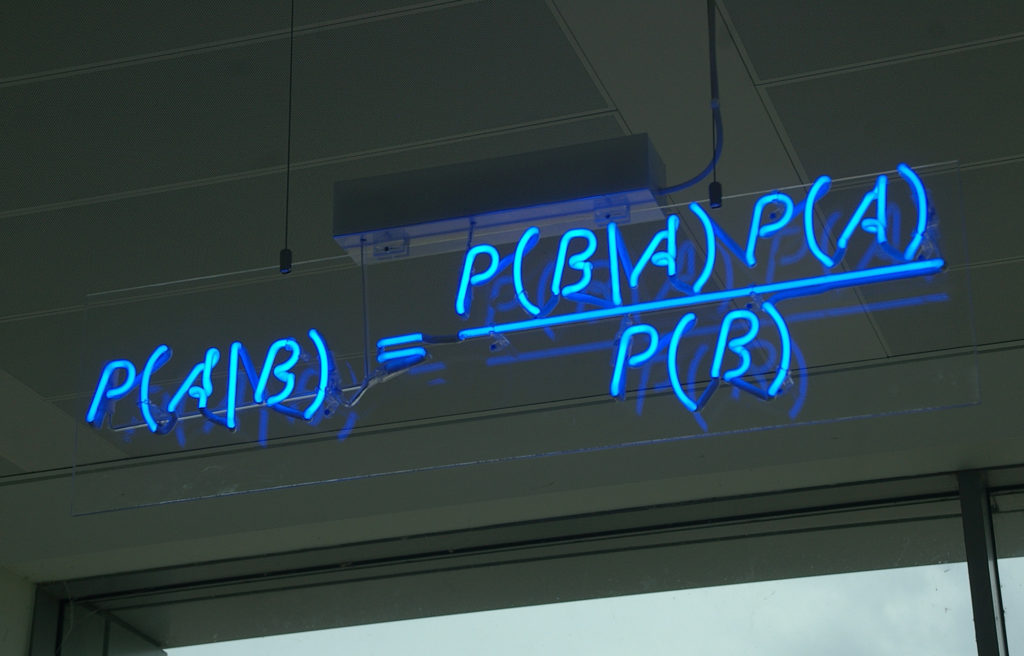

Teachers teach what they teach for two reasons. Firstly, they want to convey the knowledge and skills they have to students who want to learn. They think about the right educational means to help the students gain the knowledge and master the skills. Secondly, they are specialists in the field in which they teach, which means that they understand like no other what is necessary to learn before one can become a specialist in that field, too. Few astronomers truly enjoy the first-year linear algebra they need to master, and rarely do psychologists enjoy their statistics classes in undergrad, but these happen to be crucial ingredients for growing into the field that you want to be part of.

The communication between the various people in this program had been deteriorating to levels that I didn’t want to accept anymore, but the fact that the curriculum went from being informed by specialists to being informed by marketing and sales opportunities was the straw that broke the camel’s back. I want to be a proud teacher. Proud teachers design a program that is the best they can do to help students reach meaningful goals. You are very welcome to set learning goals based on all kinds of arguments, including sales, but once the learning goals are set, you should trust the professional’s teaching experience to craft a great, fun, and helpful course or program. It will be better for everyone’s motivation.

PS. More on my new academic role soon, presumably!